‘Extinction from AI’: Global leaders sign stark warning about artificial intelligence

Human extinction. Think about that for a second. The erasure of the human race from planet Earth.

That is what dozens of AI industry leaders, academics and even some celebrities sounded the alarm about on Tuesday.

They signed a one-sentence open letter to the public that called for reducing the risk of global annihilation due to artificial intelligence, arguing that the threat of an AI extinction event should be treated as a global priority.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war,” the statement published by the Centre for AI Safety said.

The statement was signed by leading industry officials including OpenAI CEO Sam Altman; the so-called “godfather” of AI, Geoffrey Hinton; top executives and researchers from Google DeepMind and Anthropic; Microsoft’s chief technology officer Kevin Scott; internet security and cryptography pioneer Bruce Schneier; climate advocate Bill McKibben; and the musician Grimes, among others.

The statement highlights wide-ranging concerns about the ultimate danger of unchecked artificial intelligence.

AI experts have said society is still a long way from developing the kind of artificial general intelligence that is the stuff of science fiction — today’s cutting-edge chatbots largely reproduce patterns based on training data they’ve been fed and do not think for themselves.

Still, the flood of hype and investment into the AI industry has led to calls for regulation at the outset of the AI age, before any major mishaps occur.

The statement follows the viral success of OpenAI's ChatGPT, which has helped fuel an arms race in the tech industry over artificial intelligence.

In response, a growing number of politicians, advocacy groups and tech insiders have raised alarms about the potential for a new crop of AI-powered chatbots to spread misinformation and displace jobs.

Hinton, whose pioneering work helped shape today's AI systems, previously told CNN he had decided to leave his role at Google and "blow the whistle" on the technology after "suddenly" realising "that these things are getting smarter than us".

Centre for AI Safety director Dan Hendrycks said the statement, first proposed by David Krueger, an AI professor at the University of Cambridge, does not preclude society from addressing other types of AI risk, such as algorithmic bias or misinformation.

Hendrycks likened Tuesday's statement to atomic scientists "issuing warnings about the very technologies they've created".

“Societies can manage multiple risks at once; it’s not ‘either/or’ but ‘yes/and,’” Hendrycks tweeted.

“From a risk management perspective, just as it would be reckless to exclusively prioritise present harms, it would also be reckless to ignore them as well.”

-

Technology4h ago

Technology4h agoReason behind social media slowdown in Pakistan revealed

-

Technology4h ago

Technology4h agoChinese scientists detect universe's highest energy gamma-ray line

-

Technology10h ago

Technology10h agoAI to replace 85 million jobs by 2025: WEF report

-

Technology10h ago

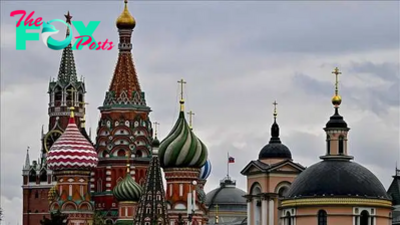

Technology10h agoHistoric Russian Kremlins: Preserving heritage through architecture

-

Technology13h ago

Technology13h agoSave $300 on the new OM System OM-1 Mark II

-

Technology13h ago

Technology13h agoStrange compound used to treat cancer can extract rare-earth metals from old tech at 99% efficiency

-

Technology16h ago

Technology16h agoAI-remastered documentary honors legendary female athletes

-

Technology16h ago

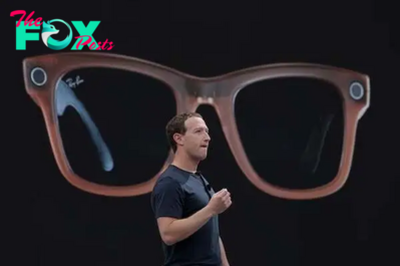

Technology16h agoZuckerberg opposes locking China down on AI technology